The story we report here took weeks, even months, of investigation, and it matters a great deal for the internal architecture of a CPU. To understand what it is about and what the consequences of our discovery are, we will first go through a quick history of the CPU's internal cycle counter, also known as the Time Stamp Counter (TSC).

The TSC was first introduced by Intel on the Pentium; its purpose is to count the number of CPU cycles elapsed during a time period. The TSC is a counter that is set to 0 when the CPU starts and is incremented every clock cycle. It can be read through an MSR, or with the dedicated instruction rdtsc (Read Time Stamp Counter). A 200MHz Pentium therefore increments this counter 200,000,000 times per second, and a 3.8GHz Pentium 4 increments it 3.8 billion times per second. The counter is stored in a 64-bit register, which at 4GHz can hold the count without overflowing for 146 years of uninterrupted use, without rebooting.
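As a rough check of the 146-year figure, here is a minimal Python sketch. The function name and the choice of a 365.25-day year are our own assumptions for illustration.

```python
# Assumption: a year of 365.25 days is used for this estimate.
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def years_until_overflow(clock_hz: float, counter_bits: int = 64) -> float:
    """Years before a counter incremented at clock_hz wraps around to 0."""
    return (2 ** counter_bits) / clock_hz / SECONDS_PER_YEAR


# A 64-bit counter incremented at 4 GHz
print(f"{years_until_overflow(4e9):.0f} years at 4 GHz")
```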

The TSC is used by many programs, for several purposes. First, it is commonly used to compute the CPU's internal frequency: the program reads the TSC, waits a known amount of time, then reads the TSC again. The difference between the two readings gives the number of cycles elapsed during that period, from which the CPU's internal clock speed can be deduced. Doing this regularly makes it possible to detect variations of the CPU clock speed in real time, for example on a mobile CPU that uses a clock modulation mechanism.

Besides, the TSC can be used for performance tests, as it gives the exact number of cycles needed to run a piece of code. This is used by most benchmarks, which basically measure the performance of a system as follows:

FLOPS = Number of operations / time

The number of operations is provided by the TSC, and the time is obtained from the PC's system clock.
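The frequency measurement described above can be sketched as follows. This is only an illustration: Python cannot execute rdtsc directly, so the two counter readings are simulated values, and the function name is hypothetical.

```python
TSC_MODULUS = 2 ** 64  # the TSC is a 64-bit counter


def estimate_clock_hz(tsc_start: int, tsc_end: int, elapsed_seconds: float) -> float:
    """Deduce a clock frequency from two TSC readings taken elapsed_seconds apart."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    # The modulo keeps the difference correct even if the counter wrapped around.
    cycles = (tsc_end - tsc_start) % TSC_MODULUS
    return cycles / elapsed_seconds


# Simulated readings: 1.5 billion ticks counted over half a second
print(estimate_clock_hz(1_000_000, 1_501_000_000, 0.5) / 1e9, "GHz")
```

Note that this only yields the real core frequency as long as the TSC is incremented once per core clock cycle.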

What change did Intel introduce? Basically, the TSC may no longer be incremented at the CPU's internal clock speed. Some explanation: since its F41 revision, the Prescott Pentium 4 uses a clock modulation mechanism to reduce its power consumption, the C1E state. This mechanism lowers the CPU multiplier to 14x when idle, that is, when the CPU is not in use, which reduces both power consumption and heat dissipation. Then the Pentium 4 6xx series introduced a more advanced clock modulation mechanism, EIST, which changes the CPU clock multiplier and voltage according to CPU utilization. At that point, we noticed unexpected behaviour in detection programs, as shown here:

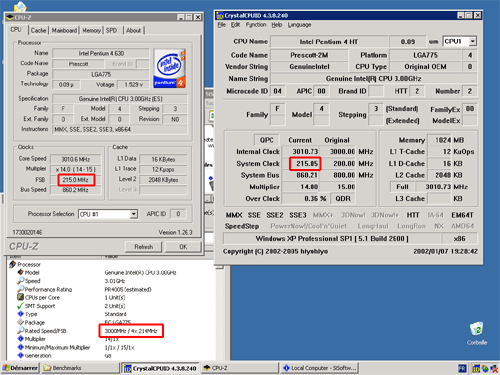

This is a Pentium 4 630 system with default BIOS settings, running a freshly installed OS. We used CPU-Z 1.26.3, Sandra 2005, and the latest version of CrystalCPUID, 4.3.8.240. Notice that all these programs detect a CPU frequency of 3GHz, but display a wrong FSB of 215MHz and a 14x multiplier, when the real numbers are 15 x 200MHz. Strange! These programs work as follows:

- Use the TSC to get the CPU's internal clock speed

- Read the multiplier from an MSR

- Divide the frequency by the multiplier to get the FSB.
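The last step can be sketched in a few lines of Python. The function name is hypothetical, and the inputs are simply the figures quoted above rather than values read from real hardware.

```python
def derive_fsb_mhz(tsc_clock_mhz: float, multiplier: int) -> float:
    """FSB as a detection tool derives it: measured clock divided by the MSR multiplier."""
    return tsc_clock_mhz / multiplier


# 3 GHz measured through the TSC, 14x multiplier read from the MSR
print(f"{derive_fsb_mhz(3000.0, 14):.1f} MHz")
```

With 3GHz and 14x, this gives a little over 214MHz, close to the 215MHz the tools display, instead of the real 200MHz.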

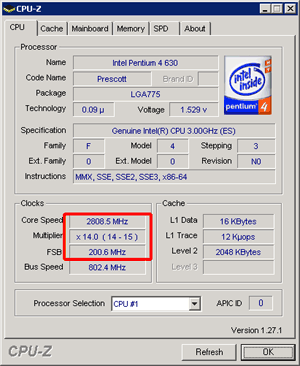

Nevertheless, these values are obviously wrong. The problem comes either from the TSC or from the multiplier, but the multiplier is correct, as its changes match the behaviour of the C1E state (14x when idle). To figure out what the problem was, we used CPU-Z 1.27, which computes the CPU clock speed in a different way, without the TSC. The result is the following:

Bingo: the frequency is now 2.8GHz in idle mode, and the FSB is correctly reported as 200MHz. So why did all the previous programs return 3.0GHz when the real CPU clock is 2.8GHz? Because the TSC is no longer incremented at the real CPU frequency. In this case, the CPU is running at 2.8GHz BUT the TSC is still incremented at 3GHz. This problem is hard to see in normal use, however, because any CPU load restores the maximum multiplier and therefore the 3GHz frequency. So we used a small tool that forces the multiplier to its lowest value, whatever the CPU load. This tool also displays the CPU clock speed using two different methods: one uses the TSC, and the other is the same as the one used in CPU-Z 1.27.

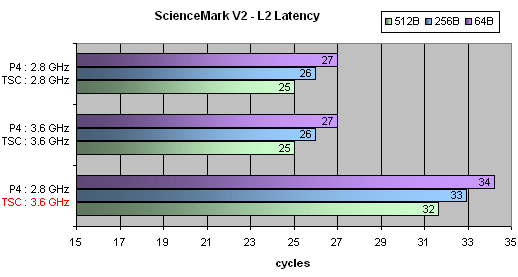

We can clearly see here that the TSC is incremented at a frequency that is not the real one, so every program that relies on the TSC gets a value that does not match the real CPU speed. As we mentioned above, benchmark programs use the TSC, and reading back a wrong counter value produces wrong results! To show this, we used the L2 latency test of ScienceMark 2. This test measures the cache latency in clock cycles, and the result depends on the CPU architecture only, that is, on the CPU model. Consequently, all Prescott-based CPUs return the same value, whatever their clock speed.
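To see why a cycle-count measurement is affected, here is a minimal sketch of the arithmetic. The function name is hypothetical, and the 28-cycle latency is a placeholder chosen for the example, not a measured Prescott value.

```python
def cycles_seen_by_tsc(real_core_cycles: float,
                       core_clock_mhz: float,
                       tsc_clock_mhz: float) -> float:
    """Cycle count a TSC-based benchmark reports when the TSC rate differs from the core clock."""
    elapsed_us = real_core_cycles / core_clock_mhz  # real duration of the operation
    return elapsed_us * tsc_clock_mhz               # ticks counted during that time


# Placeholder latency of 28 core cycles, core at 2.8 GHz, TSC still ticking at 3 GHz
print(cycles_seen_by_tsc(28, 2800.0, 3000.0))
```

When both clocks are equal, the function returns the real cycle count; as soon as the core slows down while the TSC keeps its rate, the reported count is inflated by the ratio of the two frequencies.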

As the results show, the latency is the same at 2.8 and at 3.6GHz, but it changes when the multiplier is forced to 14x, because the TSC keeps running at 3.6GHz. This reliable benchmark is fooled. What are the consequences of this "new" behaviour?

- Obviously, benchmarks can be fooled. As we mentioned, the effect is hardly noticeable in normal conditions, as the CPU then operates at its maximum multiplier. But what if the CPU is throttling? If the CPU uses TM2 thermal management (which lowers the frequency in case of overheating), the multiplier will decrease, but the reported frequency will not change. The user may not even notice that the CPU is throttling.

- If the TSC is not incremented at the CPU frequency, what is its purpose? Providing a fixed-frequency timer? All timers on the PC are already based on a fixed frequency; the TSC is (was) the only exception. If the TSC no longer does its job, it loses its purpose and becomes useless.

Digging deeper, we noticed that on the Prescott F4x CPUs the TSC already uses the boot multiplier as a reference and keeps being incremented at that frequency, regardless of the changes that can occur through C1E or EIST. We tried to contact Intel to get more information and an explanation for this change. As often with Intel, we got no response, even though this problem concerns CPUs that have already been on sale for a couple of months. We then posted on Intel's developer forum. Intel's answer is a nice workaround: "The answer to this includes Intel confidential information, so we are unable to post the resolution to this board." We obviously won't get our answer. So we can only try to guess why Intel made this change:

- Fool benchmarks? Very unlikely, as the cheat would have come to light sooner or later. And in normal conditions, the problem does not affect the results.

- Hide the real CPU frequency? As clock modulation mechanisms become widespread, CPUs tend to display frequencies below the specification they were sold for. For example, the Pentium M spends much of its time at its lowest multiplier, which does not affect its overall performance at all but may worry users. From a communication standpoint, always displaying the stock frequency would avoid a lot of questions from users.

- Limit overclocking? With two clocks running at different speeds, one at the maximum "rated" speed and one at the real speed, it would be easier to prevent the real clock from going x% higher than the rated speed.

- Another technical reason? We already know that Intel plans major changes to the clock management of dual-core CPUs. Each core should be able to run at its own clock speed, and the two could differ. In that case, the TSC would be incremented at the same rate whatever the individual core speeds are. This could make things easier, but then why use this feature in single-core CPUs?

Whatever the reason, Intel does not want to give explanations, and did not think it relevant to mention this change in any publication, or even to developers. After we found this, Intel answered as follows:

---------------

The current PRM does not include a complete description for the latest Intel(r) Pentium(r) 4 Processor TSC operation. Intel is currently working on a clarification of the Programmers Reference Manual (PRM) in relation to, but not inclusive of, the following points.

For Intel(r) Pentium(r) 4 Processors with CPUID (Family, Model, Stepping) greater than 0xF30 the designed implementation of the TSC is for the counter to operate at a constant rate. This was implemented due to a request from Operating System Software vendor(s). That rate may be set by the maximum core-clock to bus-clock ratio of the processor or may be set by the frequency at which the processor is booted. The specific processor configuration will determine the exact behavior.

This constant TSC behavior ensures that the duration of each clock tick is uniform and supports the use of the TSC as a high resolution wall clock timer even while the processor core may change frequency. The use of the TSC as a wall clock timer has effectively been prioritized over other uses of the TSC. This is the architectural behavior for the TSC moving forward.

To count processor core clocks or to calculate the average processor frequency Intel recommends using the PMON counters Monitoring data from the event counters over the period of time for which the average frequency is required. See PRM Volume 3 Chapter 15 Debugging and Performance Measuring,Section 15.10.9 and Appendix A Performance Monitoring Events for details on the Global_Power_Events, event.

---------------
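Intel's recommendation amounts to counting core clocks with a performance-monitoring event while using the constant-rate TSC as the wall clock. Here is a minimal sketch of that arithmetic; the counter deltas are simulated, the function name is hypothetical, and actually reading the performance counters requires privileged access that is not shown here.

```python
def average_core_mhz(core_clocks_delta: int,
                     tsc_delta: int,
                     tsc_rate_mhz: float) -> float:
    """Average core frequency over an interval timed with a constant-rate TSC."""
    elapsed_us = tsc_delta / tsc_rate_mhz   # the TSC acts as the wall clock
    return core_clocks_delta / elapsed_us   # core clocks per microsecond, i.e. MHz


# Simulated interval: 3.0 billion TSC ticks at 3000 MHz, 2.8 billion core clocks
print(average_core_mhz(2_800_000_000, 3_000_000_000, 3000.0), "MHz")
```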

In short, Intel acknowledges the change in TSC behaviour on the Prescott line of CPUs and will update the documentation accordingly. We think this update should have been made when Prescott was released, not one year later. In addition, this change was, according to Intel, motivated by requests from OS vendors (namely Microsoft; who else could have such an influence on Intel's chip design?). Very convenient, and impossible to check. The real reason is still a (marketing or technical?) mystery.